Introduction

On Jul 13, 2019, my colleague Lee and I attended a short-term program at Tsinghua University to learn how to implement deep learning techniques for industrial robots. We are engineers at PIX Moving, and we aim to apply machine learning to improve the wire-arc additive manufacturing (WAAM) process. This post records what we learned.

Reasoning

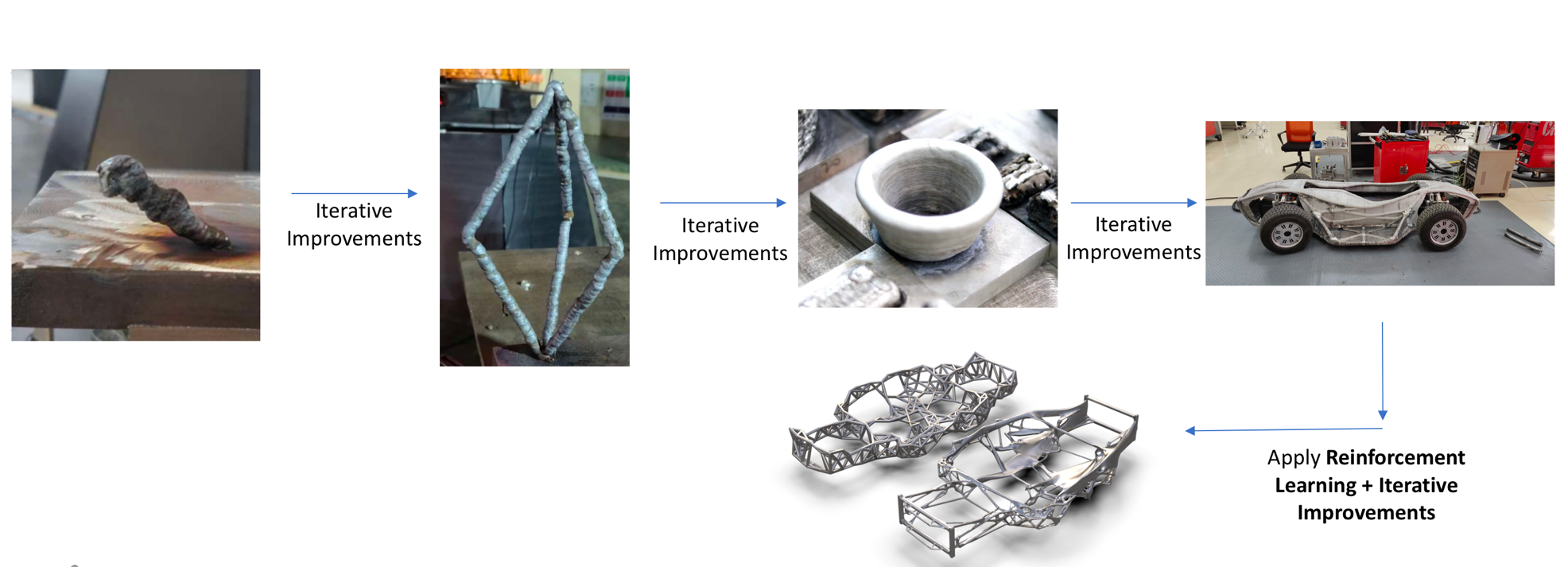

In its basic form, WAAM is the application of robotic welding technology to metallic 3D printing. Because of inherent limitations of welding technology, such as the size of the weld bead, WAAM is not a net-shape process (post-machining is needed) and cannot match the shape complexity achieved by powder-based technologies. The final print quality, measured by surface waviness and dimensional accuracy, depends on many parameters, such as wire feed speed, current, robot travel speed, tool-path plan and work distance. The interaction between these parameters and the final shape is complex, and understanding it requires extensive experimentation. We believe that a model based on reinforcement learning can learn this relationship and lead to better print quality.

Metal 3D printing at the PIX factory: a chassis being 3D-printed (WAAM)

WAAM at PIX

Problem Statement

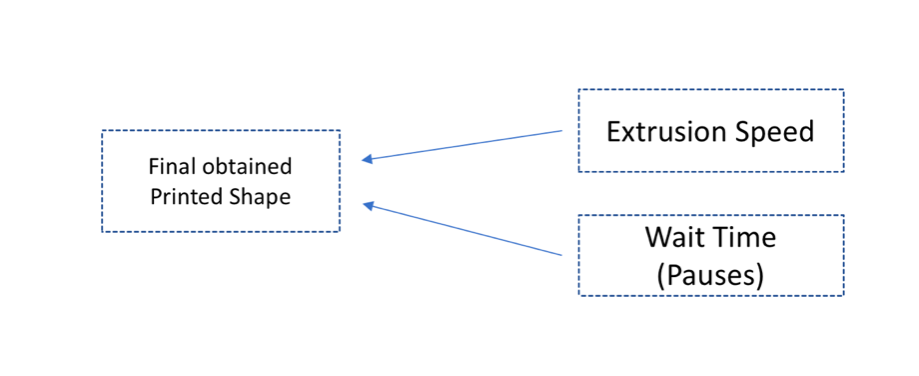

The problem involved training a KUKA KR6 900 industrial robot to print planar plastic wire strands of a desired shape between two end points. Only the desired shape is fed as input, and the robot must work out the required input parameters on its own. So the robot must be trained to find the relationship between the input parameters and the final shape obtained.

To solve this problem, a deep learning model was developed based on a CNN (Convolutional Neural Network) and an LSTM (Long Short-Term Memory) network. These networks were chosen because they are known to work well on image-based problems. The 2D nature of the problem allows us to collect data in the form of images and use it to train the deep learning algorithm.

3D Printing a plastic strand

Solution Technique

The training data is collected in the form of images. The robot is trained on 50 experiments, and in each experiment it prints a planar curve. The input parameters for each printed curve are randomly varied using a custom software tool developed in Rhino Grasshopper, leading to a different curve design in each experiment. Only two input parameters were considered: extrusion speed and pause time.
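The random variation of the two parameters can be sketched in a few lines. This is only an illustration in Python, not the Rhino Grasshopper tool used in the program; the parameter ranges, the units and the number of points per curve are assumptions, since the post does not state them.

```python
import random

# Hypothetical parameter ranges and units; the post does not state them.
EXTRUSION_SPEED_RANGE = (1.0, 5.0)  # assumed mm/s
PAUSE_TIME_RANGE = (0.0, 2.0)       # assumed seconds


def sample_experiments(n_experiments, points_per_curve, seed=None):
    """Return one list of (extrusion_speed, pause_time) pairs per experiment."""
    rng = random.Random(seed)
    experiments = []
    for _ in range(n_experiments):
        curve = [
            (rng.uniform(*EXTRUSION_SPEED_RANGE), rng.uniform(*PAUSE_TIME_RANGE))
            for _ in range(points_per_curve)
        ]
        experiments.append(curve)
    return experiments


# 50 experiments as in the post; 10 points per curve is an assumed value.
experiments = sample_experiments(n_experiments=50, points_per_curve=10, seed=0)
print(len(experiments), len(experiments[0]))
```

Fixing the seed makes a set of experiments reproducible, which helps when a print has to be repeated.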

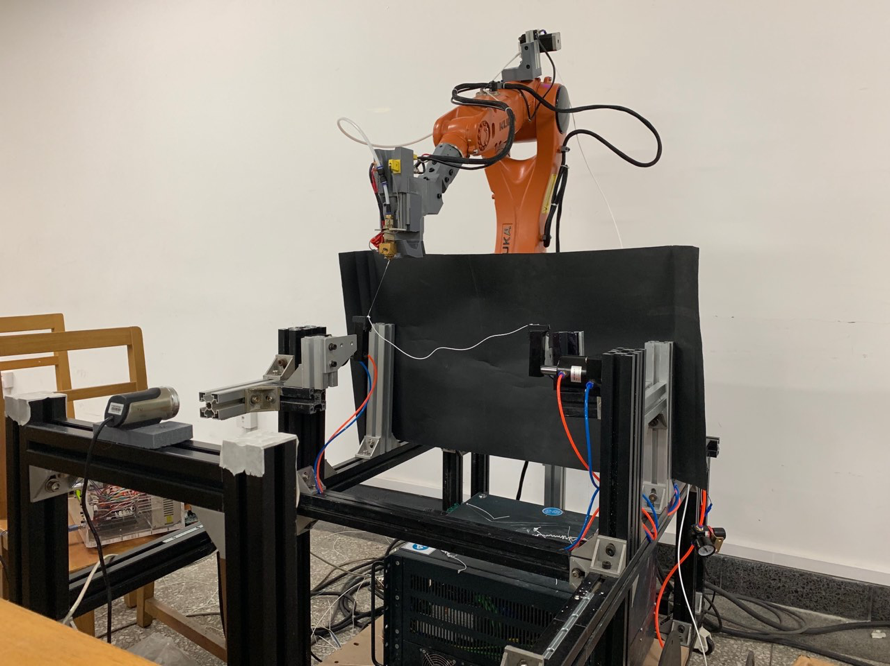

The Setup

The following tools were used to train the robot:

- The robot (KUKA KR6 900)

- Camera

- 3D-printing controller (custom-designed with Arduino)

- Experimental Test stand

The entire setup allows rapid experimentation and data collection. A program written in Processing 2 controls when the camera takes images. Inexpensive ABS plastic filament is used as the feedstock for these experiments. An Arduino controller controls the filament feed rate and temperature based on data from the G-code. Once the setup is ready and the G-code instructions are fed in, the experimental process is largely automatic. However, human intervention is sometimes required to clean the printed piece before a new experiment starts.

Experimental Setup (at Tsinghua University)

3D Printing curves

Data Processing and Preparation

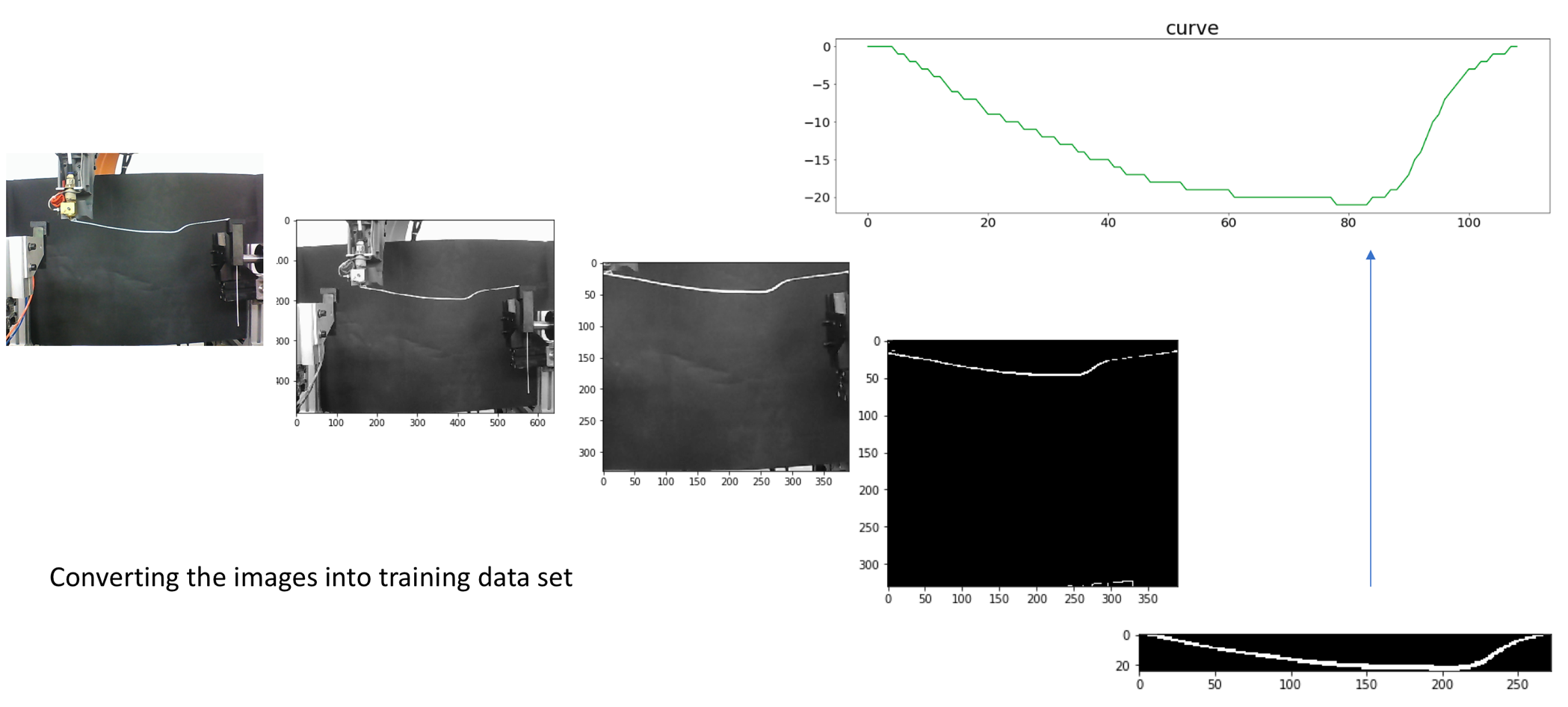

A total of fifty experiments were performed for data collection, and for each experiment sixty-one images were collected at regular time intervals. A Python script then processed the images into numeric data consisting of the z-coordinates (heights) of the curve at various points, forming our training dataset.
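One way such a height extraction could work is sketched below with NumPy. It is not the script used in the program. It assumes a side-on grayscale image in which the strand is darker than the background, and it reports heights in pixels; the function name and the threshold are hypothetical.

```python
import numpy as np


def height_profile(gray, threshold=128):
    """Return the strand height (in pixels) for each image column.

    gray: 2D array of grayscale values, with row 0 at the top of the image.
    Pixels darker than `threshold` are treated as strand material.
    Columns without any strand pixel get NaN.
    """
    gray = np.asarray(gray)
    mask = gray < threshold                # True where the strand is
    n_rows = gray.shape[0]
    top_row = mask.argmax(axis=0)          # first True row in each column
    heights = (n_rows - 1 - top_row).astype(float)  # height above bottom row
    heights[~mask.any(axis=0)] = np.nan    # no strand found in this column
    return heights


# Tiny synthetic image: white background (255) with a few dark strand pixels.
image = np.full((5, 4), 255)
image[3, 0] = 0
image[1, 1] = 0
image[2, 2] = 0
print(height_profile(image))
```

For the synthetic image, the first three columns give heights of 1, 3 and 2 pixels, and the last column, which contains no strand, gives NaN. Converting pixels to physical units would need a camera calibration step that is not covered here.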

Image processing

Every image is then converted into tabular data with three columns: extrusion speed values, pause time values and curve coordinate values. The first two columns are merged to form a 3-dimensional array, so that we deal with just two arrays: the input array and the output array. The training dataset is now ready.
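The merge of the two parameter columns can be sketched with NumPy as follows. The array sizes and values are small placeholders rather than the real dataset, and the variable names are hypothetical.

```python
import numpy as np

n_samples, n_points = 4, 6  # small placeholder sizes, not the real dataset

# Placeholder columns; in practice these come from the processed images.
extrude_speed = np.zeros((n_samples, n_points))
pause_time = np.ones((n_samples, n_points))
curve_height = np.zeros((n_samples, n_points))

# Merge the two parameter columns along a new last axis to get a 3D array.
params = np.stack([extrude_speed, pause_time], axis=-1)

print(params.shape)        # (4, 6, 2)
print(curve_height.shape)  # (4, 6)
```

Keeping the parameters in a single array of shape (samples, points, 2) matches the layout that recurrent layers in Keras expect for sequence data.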

Training Neural Networks and Validation

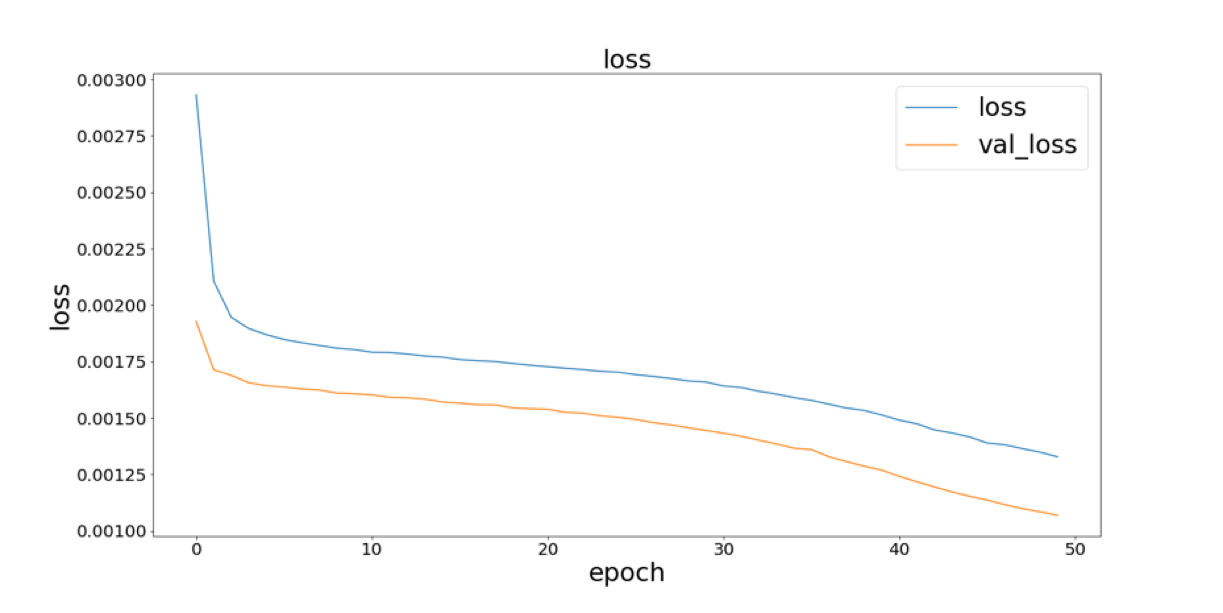

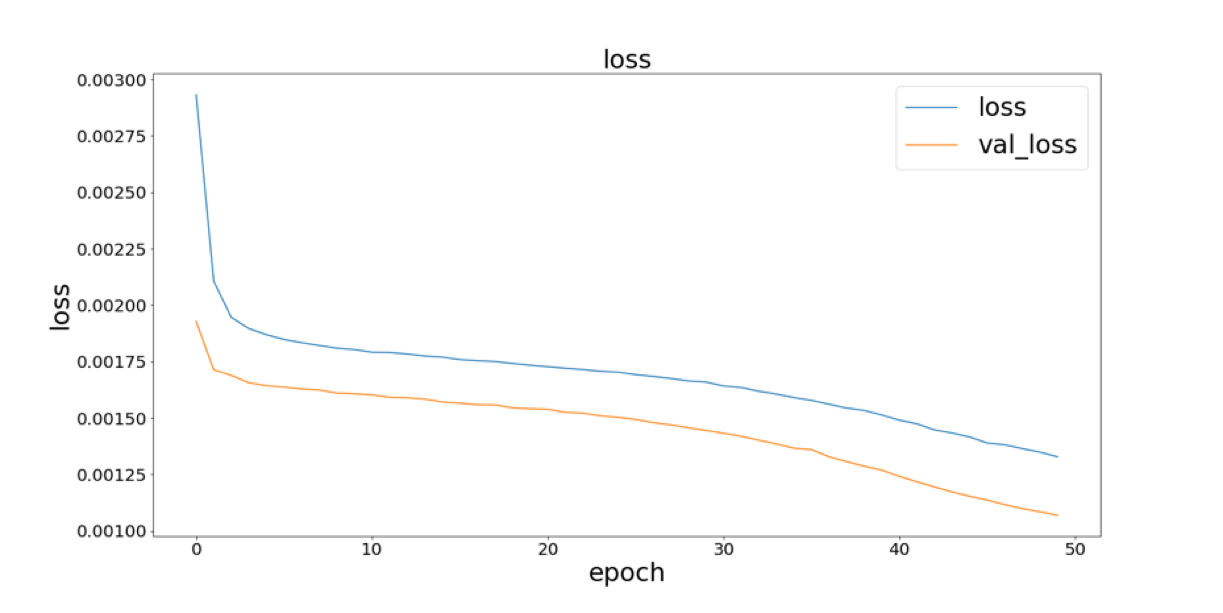

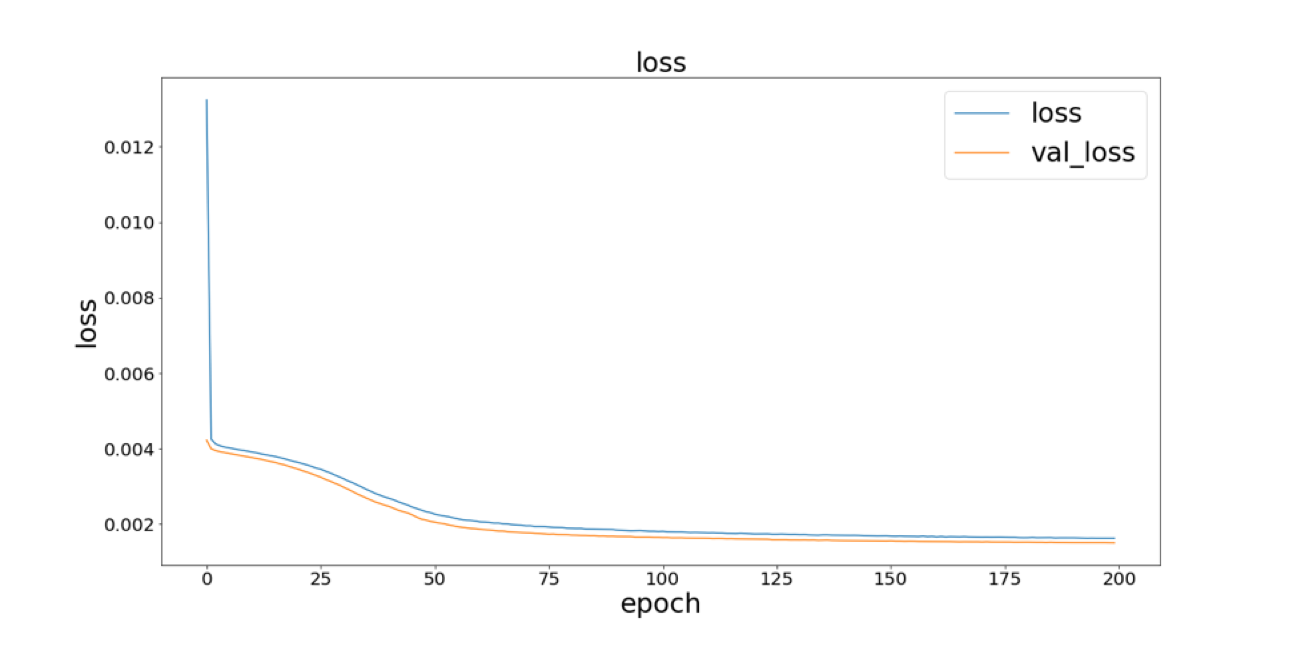

A simple neural network (NN), a Convolutional Neural Network (CNN) and a Long Short-Term Memory (LSTM) network were implemented in the Keras framework with TensorFlow as the backend. Keras and TensorFlow are open-source, Python-based tools that simplify building such neural networks. Parameters such as batch size, number of epochs and dropout rate were tuned to get the best possible outcome. After plotting the losses against the epochs, the LSTM proved to be the most effective and was chosen as the deep learning model for subsequent validation and deployment.
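A minimal Keras sketch of an LSTM of this kind is shown below. It is not the architecture used in the program. The layer sizes, the dropout rate, the number of points per curve, and the choice to map curve heights to the two parameters at each point are all assumptions made for illustration.

```python
from tensorflow import keras

n_points = 20  # assumed number of sampled points along each curve
n_params = 2   # extrusion speed and pause time

model = keras.Sequential([
    keras.Input(shape=(n_points, 1)),              # one height value per point
    keras.layers.LSTM(32, return_sequences=True),  # one hidden state per point
    keras.layers.Dropout(0.2),                     # assumed dropout rate
    keras.layers.Dense(n_params),                  # two parameters per point
])
model.compile(optimizer="adam", loss="mse")
model.summary()
```

Training would then call `model.fit` with a chosen batch size and number of epochs. The `History` object it returns holds the per-epoch losses that can be plotted to compare models.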

NN

CNN

LSTM

Losses for NN, CNN and LSTM

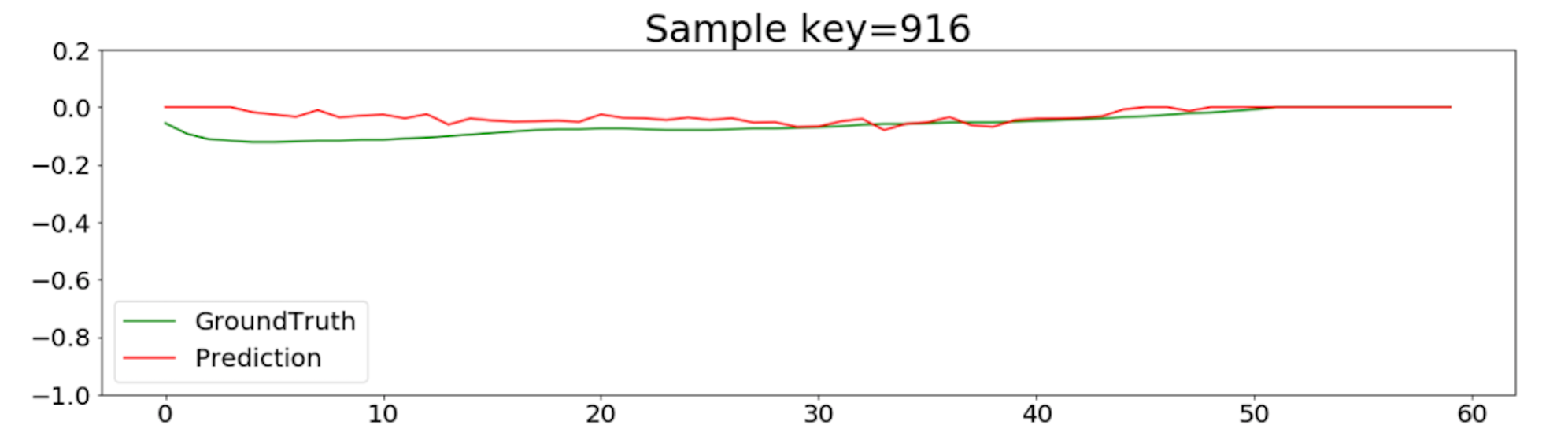

The LSTM model is then validated on a test dataset and shows good predictions. The following plot is for a random sample from the test dataset. The green line represents the input parameters that the network predicts are required to make the curve in the test sample, whereas the red line represents the actual input parameters that were used. The prediction is quite good and acceptable!
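Besides comparing the two lines by eye, the gap between predicted and actual parameters can be summarized as a single number. The sketch below computes a mean absolute error in plain Python. The metric is a suggestion rather than something used in the program, and the numbers are placeholders, not measurements.

```python
def mean_absolute_error(predicted, actual):
    """Average absolute difference between two equal-length sequences."""
    if len(predicted) != len(actual):
        raise ValueError("sequences must have the same length")
    return sum(abs(p - a) for p, a in zip(predicted, actual)) / len(actual)


# Placeholder numbers for illustration only; not measurements from the post.
predicted_speed = [2.0, 2.5, 3.0, 3.5]
actual_speed = [2.0, 3.0, 3.0, 3.0]
print(mean_absolute_error(predicted_speed, actual_speed))  # 0.25
```

Computing this value separately for extrusion speed and pause time keeps the two parameters, which have different units, from being mixed in one figure.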

Validation

Final Use

The industrial robot can now use this model to decide what input parameters (G-code) are needed to make a user-defined shape. Here is a 3D-printed part made using the trained robot!

3D printed using the LSTM-trained robot

Discussion

This project gave us an idea of how we can apply deep learning to industrial robots. Industrial robots that can be trained to see, understand and make decisions are now in development, and Fanuc has taken a lead in this direction. At PIX, we see potential in this technology to improve our metallic 3D printing process (WAAM). However, instead of neural networks, we now aim to apply Reinforcement Learning (RL). The underlying mechanism of RL, which determines ideal conditions and maximizes performance by rewarding or punishing its attempts to learn, makes it more suitable for training industrial robots. Applying this technique will help us do away with the time and money we spend on experimentation and on frequent manual intervention to ensure good 3D-print quality.

This article was contributed by PIX mechanical engineer Siddharth.

Mechanical Engineer. Majored in Aerospace Engineering, Structures and Materials, graduated from University of Michigan.